During CAF LTAR’s DataCAFe, Dr. Eric Russell led a discussion on working with data from different sources and ways to avoid issues. He used his experience working with 18 LTAR sites as a way to illustrate his points. His write up is below.

As a co-lead processing eddy covariance (EC) data for an active working group within LTAR, the division between those who are familiar with larger time-series data (i.e., eddy covariance data), compared to those who are less comfortable with time-series based data sets is apparent. An issue that arises from synthesis work is not just getting the data from the appropriate persons but in a format that is usable and does not require a large effort on the part of the synthesis group to homogenize. With the focus of LTAR toward network-level science and the number of working groups, an active data-based culture is needed. From my perspective, two simple items can make life easier for cross-site synthesis regardless of the data sets used:

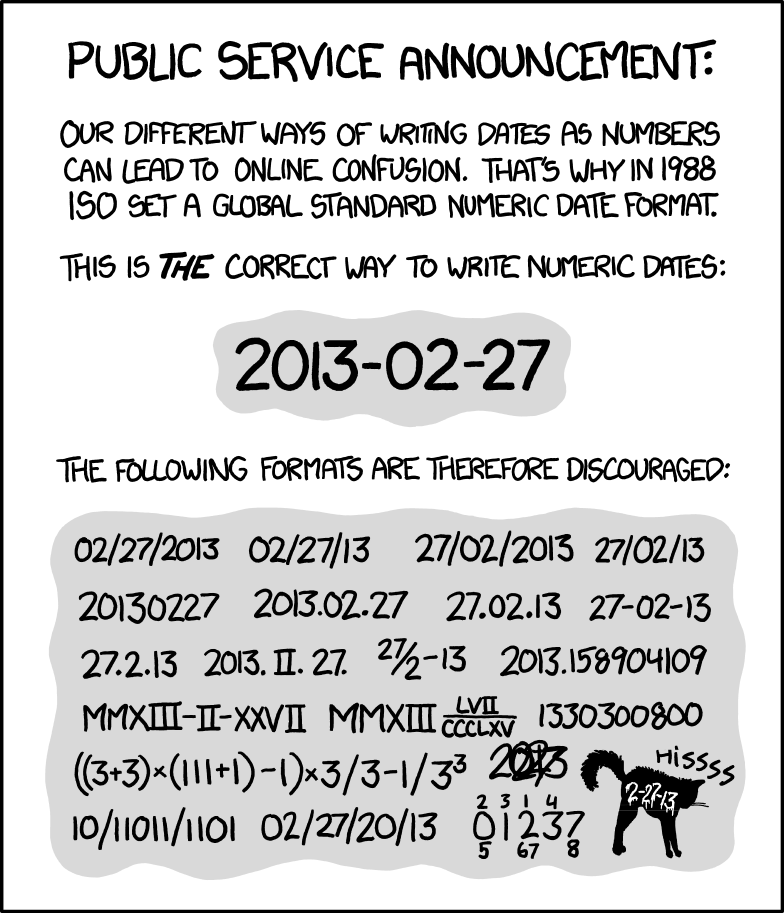

- Consistent and standardized time format following ISO standard 8601.

- Data dictionaries for the initial data requests with expected headers and units.

With time series data, a big hang-up is formatting time. Time is a problem. Different coding languages and processing programs produce and can handle multiple time formats. However, some formats are easier to handle than others. The ISO8601 time standard (e.g., YYYY-MM-DD hh:mm:ss) is a good format since it is a universally recognized standard and avoids confusion of order (Figure 1). When working with multiple data sets with different timestamps, it takes considerable time (and effort) to sort through each file manually to determine how time is labeled and how to convert to a consistent format. The goal is to be able to run data analysis and processing scripts in large batches; not handle each data set individually.

Figure 1: XKCD applies to everything.

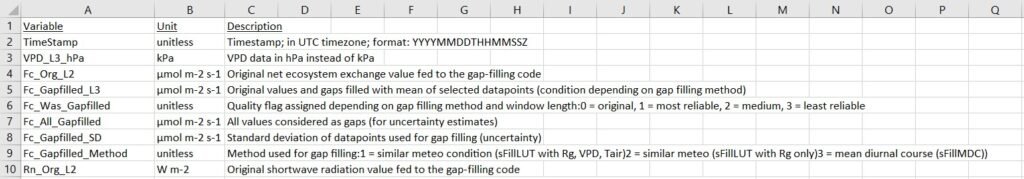

For the headers, converting column names and units is a relatively trivial exercise, except when the headers come with no explanation or units listed. This is where data dictionaries are really handy. The requesting party in theory should include this in their request, either explicitly or implied through asking submissions to follow a particular data format. A data dictionary in its simplest form is a list of the column headers, their units, and a brief (1 sentence) description of the variable (Figure 2). The less time spent manually exploring the collected data-set, the more time can be spent on the data analysis (i.e., the fun part). Everyone has their own lexicon for the different types and sets of data so having a data dictionary for the requested data is important to bridge across different disciplines.

Figure 2: Example of a data dictionary

Synthesis work takes a number of different people and a high level of coordination but the results are worth the effort. Not everyone has the same experience with different data sets; some disciplines are “forced” into more rigorous data management structures by virtue of the data used but everyone can benefit from even simple data management systems.